The assumption most teams carry into launching an insight community is that size equals value. Get enough members in, keep them active enough and the insights will follow. In practice, the communities that deliver the most consistently useful data tend to be smaller, more purposeful and more carefully designed than most people expect.

An insight community isn't a panel you pull from. It's a relationship you build — and like most meaningful relationships, the ones that last are built with intention from the very first moment.

This guide covers what high-performing insight communities actually look like in practice: how they're recruited, how they're structured, what keeps members genuinely engaged over months and years, and how research teams turn ongoing community participation into decisions that land with stakeholders.


Start With the Mandate, Not the Member Count

Before a single recruit is invited, the most important question is one that most teams skip: what does this community need to do that a series of one-off studies can't?

Communities are a long-term investment in access — ongoing access to a specific group of people who know your brand, your products or your category well enough to give you informed, longitudinal perspective. That's a fundamentally different kind of data than what you get from a fresh panel recruited for a single project.

But access is only valuable if it's pointed at something. Communities without a clear mandate drift. Members get asked questions that don't seem to connect to anything. Participation drops. The research team struggles to justify the ongoing cost. By the time anyone asks "What is this community actually for?", it's already in trouble.

Define the mandate before you recruit. What business questions will this community help answer over the next twelve to eighteen months? Who inside your organization will use this data and how? What would success look like — and how will you know when you've achieved it?

That mandate becomes the brief for everything that follows: who you recruit, what you ask them and how often you need to hear from them.

Recruit for Fit, Not for Volume

Community recruitment is where most programs make their most expensive mistake. Chasing numbers — a bigger panel, broader reach, more respondents to draw from — produces communities full of people who aren't especially invested in the topic, the brand or the research process. Participation rates suffer. Quality suffers. The data looks comprehensive but it's shallow.

The recruitment brief for a community should look nothing like the recruitment brief for a survey. You're not looking for demographic representation alone. You're looking for people who have a real relationship with the subject matter and enough genuine interest to participate more than once.

That means being more selective at the recruitment stage — and being honest with candidates about what participation involves. A member who joins knowing they'll be asked to contribute to activities over six months is a far better community member than one who thought they were signing up for a one-time survey.

Screeners for community recruitment should test engagement and articulacy, not just demographic fit. Open-ended questions reveal which candidates can and will contribute meaningfully. That's the filter that matters.

The Onboarding Moment You Can't Get Back

The first activity a new member completes in a community sets the tone for everything that follows. It's the moment they decide — consciously or not — whether participating here is worth their time.

Most onboarding activities are too passive: a welcome message, a profile completion prompt, a background survey. These feel administrative. They don't make members feel like contributors. They don't establish what kind of community this is going to be.

The best onboarding activities do three things simultaneously: they gather useful data, they make the member feel heard and they signal that their perspective is genuinely valued. An activity that asks for a real opinion on a real question — something the research team will actually act on — delivers all three. It tells the member: this isn't a passive panel, this is a conversation.

Equally important is what happens after the first activity. Closing the feedback loop early — even a brief summary of what the team heard from the community in the first round of questions — builds the habit of participation. Members who see their input acknowledged are significantly more likely to come back for the next activity.

Activity Design: How to Earn Participation Over Time

Member engagement doesn't erode because people lose interest in research. It erodes because the activities stop feeling relevant, varied or worth the effort.

Activity design is the ongoing creative challenge at the heart of community management. The goal is to give members something genuinely interesting to respond to — questions that are specific enough to feel meaningful, framed in a way that invites real answers rather than safe ones.

A few principles that hold across the best-performing communities:

Vary the format. Discussion forums, image uploads, video responses, polls, diary tasks, idea boards — rotating the format prevents the fatigue that comes from being asked the same kind of question in the same kind of way, every single time. Different formats also surface different kinds of insight: what someone says in a discussion thread and what they show in a photo journal about the same topic can be remarkably different.

Match the ask to the time available. Not every activity can be a forty-minute deep dive. Some of the richest community data comes from short, high-frequency touchpoints — a quick reaction to a new concept, a brief check-in on how something is being used day-to-day. Respecting members' time is the fastest way to keep them coming back.

Make it feel like participation, not obligation. The framing of an activity matters as much as the content. An activity that starts with "We want to understand your experience with..." is going to draw out different energy than one that starts with "We've been thinking about a problem and we want your help."

Keeping a Community Alive Between Studies

One of the most common patterns in community management is the accordion problem: intense activity during a formal study, silence between studies and a measurable drop in engagement every time the cycle repeats. Members who aren't hearing from you aren't thinking about the community — and eventually, they stop responding when you do reach out.

A sustainable community cadence doesn't require constant heavy-lift activities. It requires enough regular contact that membership feels like an ongoing relationship rather than a series of isolated transactions.

Between studies, lightweight touchpoints maintain the connection: a brief check-in about something in the news, a quick reaction to a product update, a community-wide share-back from the last round of research. These don't need to generate publishable data. They need to remind members that they're part of something that values their time consistently — not only when a project is due.

Recognizing members also matters more than most teams expect. Not with elaborate incentive schemes, but with the simple acknowledgement that their contributions have been seen and used. The communities with the highest long-term retention rates tend to be the ones where members feel like named participants in an ongoing conversation — not anonymous voices in a rotating sample.

Turning Community Data Into Organizational Action

The research doesn't end when the community activity closes. It ends when the insight reaches the person who can do something with it — and changes what they do.

This is where many communities quietly fail. The data is rich. The analysis is thorough. The findings get written up into a report that circulates, gets presented once and then lives in a shared drive. Stakeholders move on. The next study launches without reference to what the last one found.

Closing the loop between community insight and organizational action requires a deliberate process — not just for delivering findings but for tracking what happened because of them. Which product decisions were influenced by community data? Which campaigns shifted based on community feedback? Over time, this record becomes the business case for the community's continued investment.
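
One way to keep that record is a simple impact log that pairs each finding with the decision it influenced. The sketch below is only an illustration: the `ImpactEntry` fields, the `summarize` helper and the sample entries are all hypothetical, not part of any particular platform.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ImpactEntry:
    """One community finding and what (if anything) it changed. Hypothetical schema."""
    finding: str
    study: str
    stakeholder: str
    decision: str = ""  # left empty until a decision is traced back to the finding


def summarize(entries):
    """Count findings, findings that led to a decision, and decisions per stakeholder."""
    acted = [e for e in entries if e.decision]
    return {
        "findings": len(entries),
        "acted_on": len(acted),
        "by_stakeholder": dict(Counter(e.stakeholder for e in acted)),
    }


# Illustrative entries only; not real data.
log = [
    ImpactEntry("Setup flow confuses new users", "Onboarding diary", "Product",
                decision="Reordered setup steps"),
    ImpactEntry("Tagline reads as generic", "Concept reactions", "Marketing",
                decision="Revised campaign copy"),
    ImpactEntry("Interest in a family plan", "Pricing discussion", "Product"),
]

summary = summarize(log)
print(summary)
```

Findings with no decision attached are as informative as the ones that landed: they show where insight is stalling before it reaches someone who can act on it.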

It also requires matching the format of insight delivery to how different stakeholders actually consume information. A summary deck for an executive team, a detailed analysis for a product manager and a verbatim quote pack for a creative team are all drawing from the same community data — formatted for three completely different use cases.

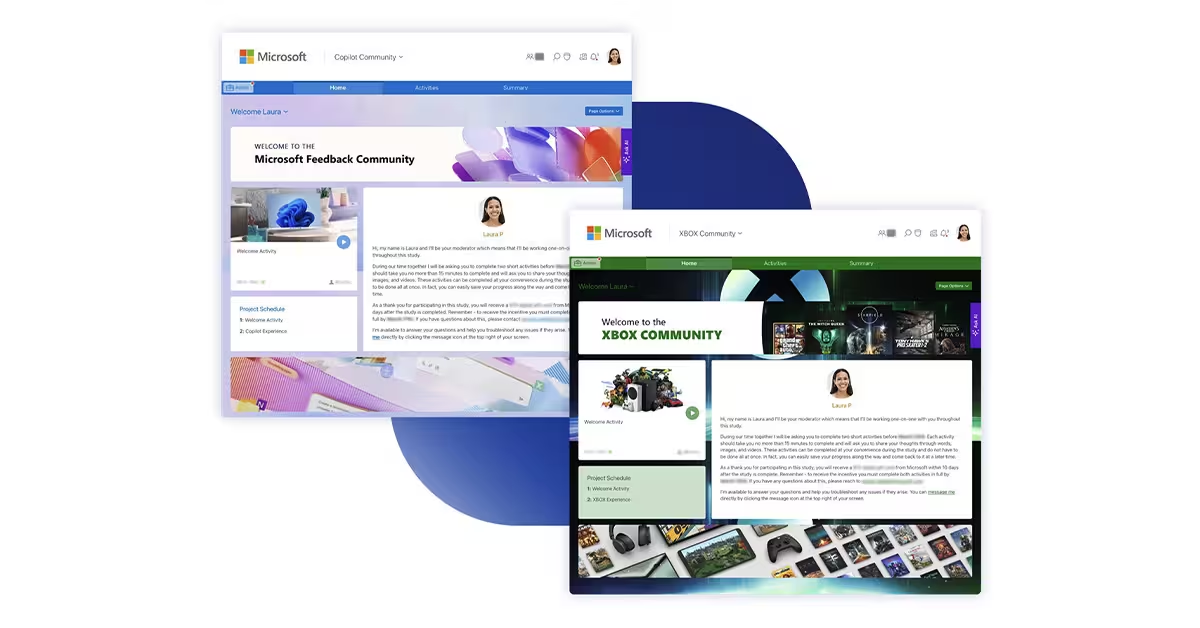

When Microsoft brought qualitative research in-house using Recollective, the shift wasn't just operational — it changed how quickly insights could reach the people making product decisions. Running two simultaneous studies with over 130 participants, their team cut time-to-insight by 80% and delivered findings fast enough that stakeholders could act on them while the context was still fresh. That speed is what turns community data from a nice-to-have into something the organization actually depends on.

Where AI Fits in Community Management

AI hasn't changed what insight communities are for. It's changed what's possible with the data they produce.

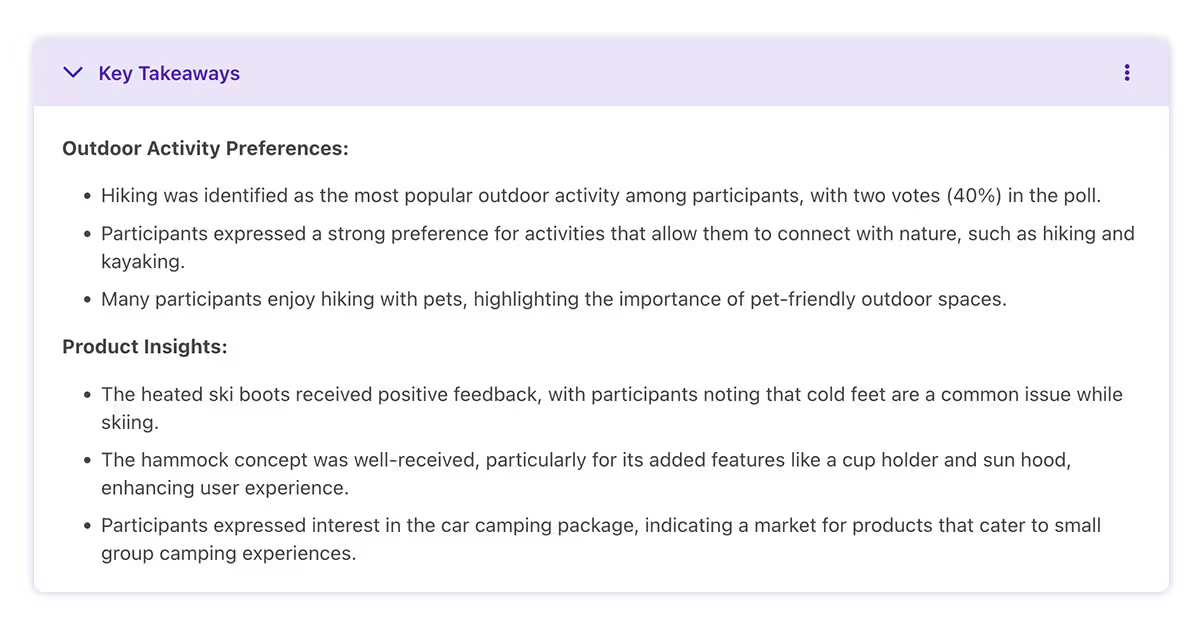

Analysis that once took a team of analysts several days can now be completed in hours — AI theme detection reads across hundreds of member responses simultaneously and surfaces the patterns, tensions and outliers that matter. For communities running ongoing studies, this means faster delivery of insights and more time for the interpretation work that only humans can do.

Transcription and summarization handle the mechanical processing of video and audio responses automatically. Automatic translation opens communities to multilingual participation without requiring separate analysis workstreams per language. And conversational AI creates the possibility of running in-depth one-on-one activities at a scale that would be impossible with fully moderated sessions.

The right platform integrates these capabilities without requiring researchers to switch tools or rebuild their workflow around the technology. For communities that are already running, the question isn't whether to add AI — it's where in the workflow the biggest bottleneck is right now.

Getting Started

If you're building your first community, the most important investment is time at the mandate stage — before you recruit a single member. A community built around a clear, specific business purpose will outperform a larger community built around a vague one every single time.

If you're managing an existing community that's losing momentum, the fastest diagnostic is participation data by activity type. Where is engagement strongest? Where has it dropped? The pattern usually tells you exactly what needs to change.
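
As a rough sketch of that diagnostic, assume you can export a participation log with an activity type, a period, the number of members invited and the number who completed. The field layout, the function names and the figures below are hypothetical and only illustrate the calculation.

```python
from collections import defaultdict

# Hypothetical export: (activity_type, period, members_invited, members_completed)
records = [
    ("discussion", "Q1", 200, 120),
    ("discussion", "Q2", 200, 70),
    ("poll", "Q1", 200, 150),
    ("poll", "Q2", 200, 148),
    ("video", "Q1", 80, 40),
    ("video", "Q2", 80, 38),
]


def participation_by_type(rows):
    """Return {activity_type: {period: completion_rate}}."""
    totals = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for activity_type, period, invited, completed in rows:
        cell = totals[activity_type][period]
        cell[0] += invited
        cell[1] += completed
    return {
        t: {p: done / inv for p, (inv, done) in periods.items() if inv}
        for t, periods in totals.items()
    }


def biggest_drops(rates, earlier, later):
    """List (activity_type, change in rate), steepest decline first."""
    changes = [
        (t, round(by_period[later] - by_period[earlier], 3))
        for t, by_period in rates.items()
        if earlier in by_period and later in by_period
    ]
    return sorted(changes, key=lambda item: item[1])


rates = participation_by_type(records)
drops = biggest_drops(rates, "Q1", "Q2")
for activity_type, change in drops:
    print(f"{activity_type}: {change:+.1%}")
```

In this made-up data, discussion activities lose far more participation than polls or video tasks, which would point the redesign effort at discussion prompts rather than at the community as a whole.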

Communities that outlast the initial excitement do one thing consistently: they treat their members like contributors rather than respondents. That distinction, subtle on the surface but significant in practice, is what separates the communities still running strong at year five from the ones that quietly wound down at month twelve. The researchers building the former aren't doing more. They're thinking differently about who they're building for. Your next study shouldn't be the first time you ask that question.

